A data lake is like the junk drawer in your kitchen: a place where you collect valuable stuff that you know you will need later but don't have the time or need to organize today. Sometimes you even forget what is in there and are surprised by the joy of finding just the right doohickey to solve your problem. To make that data actionable, though, you need a data platform such as Splunk or the ELK Stack, or a SIEM, to act as your Rosetta Stone: parsing, organizing, and normalizing the data for human consumption. But even after the data is structured, how do you realize its value?

What is a Data Lake?

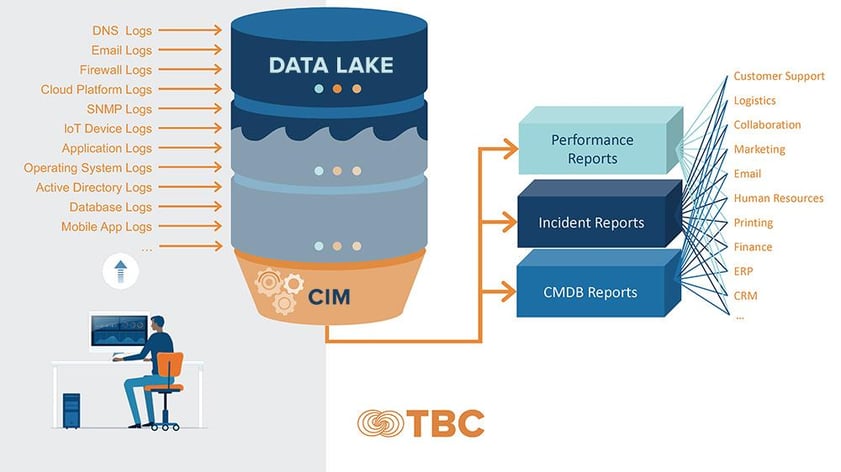

A data lake is a centralized repository where all your data lives. It is your source of truth, ingesting and storing datasets from across your enterprise. Raw data streams into the lake from every part of your IT landscape: software, hardware, networks, systems, and even your CRM.

Whether it arrives raw and unstructured or semi-structured, your data needs to be filtered, organized, and prioritized to be useful, which is a complex job given the variety of data sources and formats. Whether you need that data today or tomorrow, it resides in the lake, ready to be queried and analyzed.


Making Cents of the Data

The first reality is that data is essential to your business. The second is that it is cumbersome and costly to store, and effective data lifecycle management is challenging to implement. If you intend to mine the business intelligence locked in your data lake, you must first structure your data and filter out the noise with advanced analytics.

Are you ingesting the right data? Not all data is created equal, and you needn't store everything. But before you purge, you must understand what to throw away and what can help you make intelligent business decisions.

Valuable data:

· Meets defined business goals

· Adds visibility to your processes

· Is accurate and secure

· Is ready in a readable format

· Follows a data governance framework

· Is relevant to your business

You must break down data silos and make the data consumable to stakeholders, and the most practical way to do that is with analytics tools that translate raw data into readable, shareable information.

The value of the data lies in the analysis and reporting, and in the business's ability to integrate that intelligence into decision-making. If you can't make sense of and use what you are ingesting, you will miss opportunities to grow revenue and to improve productivity and performance metrics.

The right analytics tools and processes save time and money. Engineers can analyze security logs, find the root cause of issues more quickly, and reduce the mean time to restoration (MTTR). They can be proactive rather than reactive, understand the frequency and size of anomalies or errors, and get ahead of harmful data events. The value of data and reporting is high, and it is easier to map to ROI when the data is managed on dashboards, meets compliance standards, and is secure, consumable, stable, and able to evolve with the organization's demands.

Service Intelligence with ITSM

How is Information Technology Service Management (ITSM) connected to data lakes? While ITSM is often maligned as ‘just a ticketing service,’ its actual value lies in bringing service intelligence and structure to business operations, improving IT performance, and integrating service automation for higher resource efficiencies.

ITSM is an enabler of business operations and a driver of IT optimization. ITSM tools align service delivery with business strategy and rely heavily on consumable data to inform operational decisions. Data is ITSM's core dependency, and ingesting unaltered data from the data lake keeps ITSM processes relevant to business success.

ITSM uses a Common Information Model (CIM) to map the data you already gather from multiple logs and generate CMDB, Incident, and Performance reports. A CIM makes data searchable and creates a unified data set to inform all aspects of business functionality.
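
To make the idea of a common model concrete, here is a minimal sketch of field normalization, assuming two hypothetical log sources whose differently named fields are mapped onto shared names (`user`, `host`, `event`). The source names, field names, and sample records are all illustrative assumptions; a real CIM, such as the one Splunk ships as an add-on, defines its own data models and field names.

```python
from __future__ import annotations

from typing import Any

# Hypothetical per-source field mappings. A real CIM defines its own
# data models and field names; these are illustrative only.
FIELD_MAPS: dict[str, dict[str, str]] = {
    "windows_security": {"TargetUserName": "user", "Computer": "host", "EventID": "event"},
    "linux_auth": {"uid_name": "user", "hostname": "host", "msg_type": "event"},
}


def normalize(source: str, record: dict[str, Any]) -> dict[str, Any]:
    """Rename source-specific fields to common names, dropping unmapped fields."""
    mapping = FIELD_MAPS[source]
    common = {name: record[raw] for raw, name in mapping.items() if raw in record}
    common["source"] = source  # keep provenance so reports can trace each row
    return common


# Hypothetical sample records from two different log sources.
raw_events = [
    ("windows_security", {"TargetUserName": "jdoe", "Computer": "WS-042", "EventID": 4625}),
    ("linux_auth", {"uid_name": "jdoe", "hostname": "web-01", "msg_type": "FAILED_LOGIN"}),
]

unified = [normalize(src, rec) for src, rec in raw_events]
for row in unified:
    print(row)
```

Once both sources share the `user` and `host` fields, a single search for one user spans Windows and Linux logs alike, which is what lets a unified data set feed CMDB, Incident, and Performance reports.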

Query-based dashboards in tools like Splunk work in unison with the ITSM platform to bring visibility and real-time health checks to your IT environment. The data lake is continuously monitored and scanned for anomalies, and ITSM service alerts can be automatically triggered when anomalies or deviations occur.
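
The monitoring loop described above can be sketched as a rolling z-score check: each new sample is compared with the mean and standard deviation of the preceding window, and anything too far outside the baseline produces an incident record. This is a simplified stand-in for whatever detection logic a real platform uses; the metric name, window size, threshold, and the `build_incident` payload are hypothetical and do not correspond to any specific ITSM product's API.

```python
from __future__ import annotations

import statistics


def detect_anomalies(counts: list[int], window: int = 5, threshold: float = 3.0) -> list[int]:
    """Flag indices whose value is more than `threshold` standard deviations
    away from the mean of the preceding `window` values (a rolling z-score)."""
    anomalies = []
    for i in range(window, len(counts)):
        baseline = counts[i - window:i]
        mean = statistics.mean(baseline)
        # Fall back to 1.0 when the baseline is perfectly flat to avoid dividing by zero.
        stdev = statistics.pstdev(baseline) or 1.0
        if abs(counts[i] - mean) / stdev > threshold:
            anomalies.append(i)
    return anomalies


def build_incident(metric: str, index: int, value: int) -> dict:
    """Build a hypothetical incident payload; a real ITSM platform defines its own API and fields."""
    return {
        "short_description": f"Anomaly in {metric} at sample {index}",
        "metric": metric,
        "index": index,
        "value": value,
    }


# Hypothetical per-minute authentication error counts.
error_counts = [12, 15, 11, 14, 13, 12, 90, 14, 13]
incidents = [
    build_incident("auth_errors_per_min", i, error_counts[i])
    for i in detect_anomalies(error_counts)
]
print(incidents)
```

Only the spike at index 6 is flagged here. In practice the incident dictionary would be handed to the ITSM platform's own integration rather than printed.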

This synchronization is essential to streamline operations and fix incidents faster with the correct information. No more chasing dead ends: you can create a query and drill down to specifics, searching the data lake for granular data such as a username, job name, or event, which gives you the information to fix the root cause of the problem at the source.
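
A drill-down like this amounts to a filtered, time-ordered query. The sketch below uses SQLite's standard `sqlite3` module as a stand-in for a log platform's own query language (such as Splunk's SPL); the `events` table, its columns, and the sample rows are hypothetical.

```python
import sqlite3

# In-memory table standing in for events stored in the data lake (hypothetical schema).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (ts TEXT, username TEXT, job_name TEXT, event TEXT)")
conn.executemany(
    "INSERT INTO events VALUES (?, ?, ?, ?)",
    [
        ("2024-05-01T02:00:00", "svc_batch", "nightly_etl", "job_started"),
        ("2024-05-01T02:14:07", "svc_batch", "nightly_etl", "disk_quota_exceeded"),
        ("2024-05-01T02:14:09", "svc_batch", "nightly_etl", "job_failed"),
        ("2024-05-01T02:20:00", "jdoe", "report_export", "job_started"),
    ],
)


def drill_down(conn, username, job_name):
    """Return time-ordered (ts, event) rows for one user and job.
    Parameters are bound with ? placeholders rather than string formatting."""
    return conn.execute(
        "SELECT ts, event FROM events WHERE username = ? AND job_name = ? ORDER BY ts",
        (username, job_name),
    ).fetchall()


for ts, event in drill_down(conn, "svc_batch", "nightly_etl"):
    print(ts, event)
```

Narrowing to one user and job surfaces the `disk_quota_exceeded` event just before the failure, pointing at the root cause rather than the symptom.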

ITSM gives you control over your responses and the service intelligence to inform Incident, Problem, and Change Management with relevant data. Because the data is structured and consumable, it gives deeper insight into your IT environment, revealing patterns, vulnerabilities, and business gaps and shaping a methodology for continuous improvement.

Accelerate Your Time to Value with TBC

TBC is a business-centric IT Solutions Provider based in Phoenix, Arizona, with over 26 years of managed services experience. Our data architects and engineers work closely with our ITSM developers to consolidate your data in a way that makes the most sense to your business.

TBC’s data experts will manage your cloud, data center, and edge orchestration and provide focused monitoring of your data and IT environment. We will improve the value of your data platform licensing with managed implementation, including upgrades and maintenance for performance optimization. We can accelerate your time to value by making your data secure, resilient, compliant, and available.
